CUPERTINO, Calif. — Apple’s iOS 16 update for iPhones, expected this fall, will expand worldwide a controversial “communications security” tool that uses proprietary AI to “detect nudity” in photos sent or received through the Messages app.

The worldwide expansion of this “message analysis” feature, currently available only in the U.S. and New Zealand, will begin in September, when iOS 16 is rolled out to the general public.

iPhone models older than the iPhone 8 will not be affected; on desktop models, the macOS Ventura update will offer the option.

Apple has touted the “nudity detection” feature as part of its “Expanded Protections for Children” initiative, although privacy advocates have raised questions about the company’s overall approach to private content surveillance.

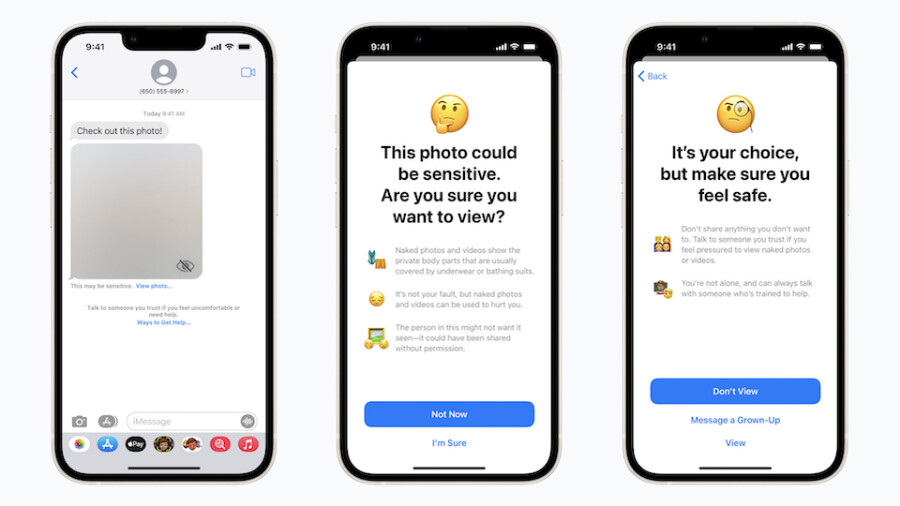

Apple describes the feature as a Messages app tool to “warn children when receiving or sending photos that contain nudity.”

These features, Apple notes, are not enabled by default: “If parents opt in, these warnings will be turned on for the child accounts in their Family Sharing plan.”

When receiving “this type of content [nudity], the photo will be blurred and the child will be warned, presented with helpful resources, and reassured it is okay if they do not want to view this photo. Similar protections are available if a child attempts to send photos that contain nudity. In both cases, children are given the option to message someone they trust for help if they choose.”

The AI tool bundled with the default Messages app, the company explained, “analyzes image attachments and determines if a photo contains nudity, while maintaining the end-to-end encryption of the messages. The feature is designed so that no indication of the detection of nudity ever leaves the device.”

Apple maintains that the company “does not get access to the messages, and no notifications are sent to the parent or anyone else.”

In the U.S. and New Zealand, the feature has been included since iOS 15.2, iPadOS 15.2 and macOS 12.1, and it requires accounts set up as families in iCloud.

As French news outlet RTL noted today in reporting the upcoming expansion of the feature, “a similar initiative, consisting of analyzing the images hosted on the photo libraries of users’ iCloud accounts in search of possible child pornography images, had been strongly criticized before being dismissed last year.”

As XBIZ reported, Apple announced in September 2021 that it would “pause” testing of that tool. The announced feature would have scanned images on users’ devices in search of supposed CSAM (Child Sexual Abuse Material) and sent reports directly to law enforcement.